Thanks to the gradual maturation of Istio over its last few releases, it is now possible to run control plane components without root privileges. We often use Pod Security Policies (PSPs) in Kubernetes to ensure that pods run with only restricted privileges.

In this post, we’ll discuss how to run Istio’s control plane components with as few privileges as possible, using restricted PSPs and the open source Banzai Cloud Istio operator.

Introduction

To accomplish what we’re setting out to do here, which is to guarantee that Istio control plane components use the fewest privileges possible, we recommend using the Istio CNI plugin. To enforce those restricted privileges, we recommend using Pod Security Policies.

Before we get started, here is a brief overview of these two concepts: Istio CNI and Pod Security Policies.

Istio CNI

The problem

By default, Istio uses an injected initContainer called istio-init to create iptables rules before the other containers in the pod can start.

This requires the user or service account that deploys pods in the mesh to have sufficient privileges to deploy containers with the NET_ADMIN capability, a kernel capability that allows network reconfiguration.

In general, we want to avoid giving application pods this capability.
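The following is a simplified sketch of the kind of init container that sidecar injection adds to each pod when the CNI plugin is not used. It is not Istio’s exact injection template. The image tag is a placeholder, the command and arguments are omitted, and the precise fields vary between Istio versions. The relevant part is the NET_ADMIN capability in its securityContext.

```yaml
# Simplified excerpt of a pod spec after sidecar injection without CNI.
# Not the exact injection template; the image tag is a placeholder and
# the command/args that program iptables are omitted.
initContainers:
- name: istio-init
  image: docker.io/istio/proxyv2:1.6.x   # placeholder tag
  securityContext:
    capabilities:
      add:
      - NET_ADMIN        # required to rewrite the pod's iptables rules
    runAsUser: 0         # runs as root to configure networking
    runAsNonRoot: false
```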

The solution

One way of mitigating this issue is to move the iptables rule configuration out of the pod by using a CNI plugin.

CNI (Container Network Interface), a Cloud Native Computing Foundation project, consists of a specification and libraries for writing plugins to configure network interfaces in Linux containers, along with a number of supported plugins.

The Istio CNI plugin replaces the istio-init container and provides the same functionality, but without requiring Istio users to have elevated privileges.

It performs traffic redirection in the setup phase of the Kubernetes pod’s lifecycle, thereby removing the NET_ADMIN capability requirement for users deploying pods to the mesh.

For a more detailed explanation of how Istio CNI works, check out our Enhancing Istio service mesh security with a CNI plugin blog post.
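For contrast, here is a rough sketch of the injected proxy container when the Istio CNI plugin handles traffic redirection. No istio-init container is injected, and the sidecar can drop every capability. The sketch is simplified and assumes the default injection settings of this Istio generation, including the proxy’s usual UID of 1337. The image tag is a placeholder.

```yaml
# Approximate excerpt of a pod spec after sidecar injection with the
# Istio CNI plugin enabled (simplified; image tag is a placeholder).
# There is no istio-init entry under initContainers.
containers:
- name: istio-proxy
  image: docker.io/istio/proxyv2:1.6.x   # placeholder tag
  securityContext:
    allowPrivilegeEscalation: false
    capabilities:
      drop:
      - ALL              # NET_ADMIN is no longer requested
    privileged: false
    readOnlyRootFilesystem: true
    runAsNonRoot: true
    runAsUser: 1337      # non-root proxy UID (assumed default)
    runAsGroup: 1337
```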

Pod Security Policies

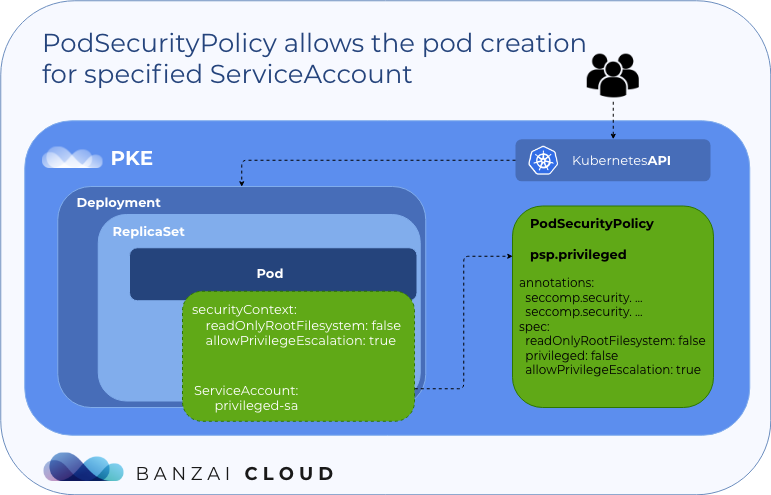

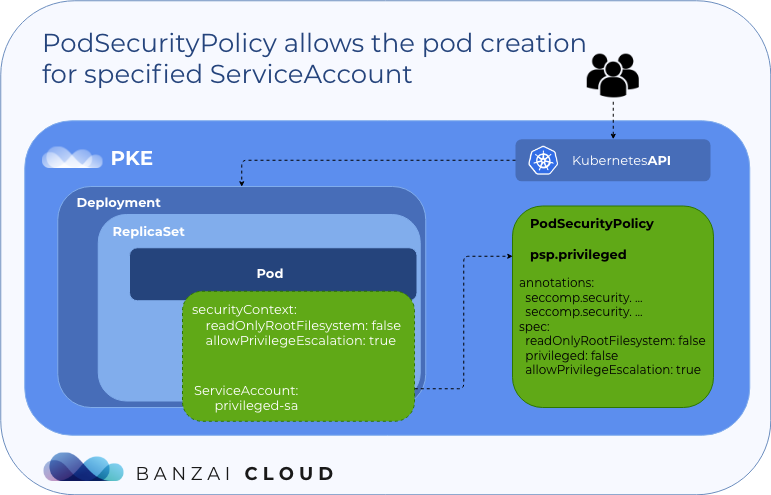

Pod Security Policies provide fine-grained authorization for pod creation.

Let’s try to quickly summarize how they work:

Note: You must enable the PSP admission controller in your Kubernetes cluster to use PSPs.

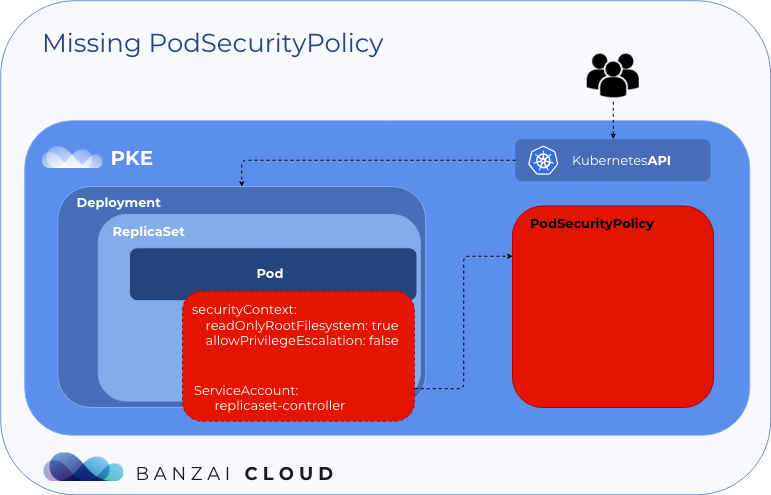

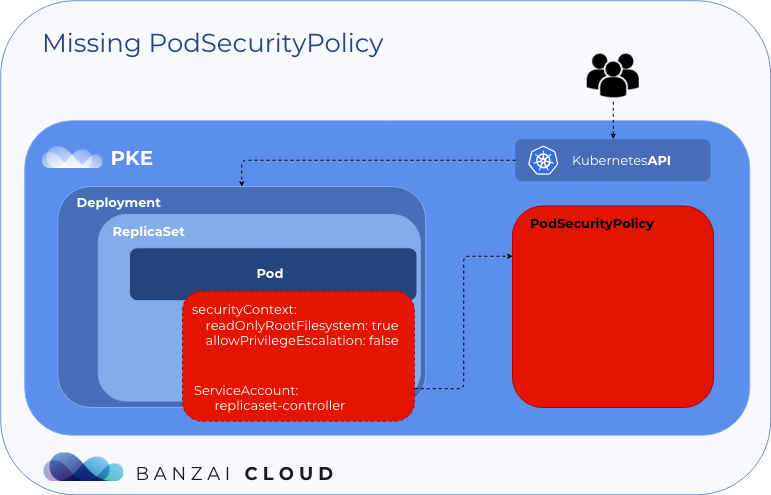

Missing Pod Security Policy

If you try to create a pod without a PSP resource attached to its Service Account (through a Role and a RoleBinding), the admission controller will deny the pod’s creation.

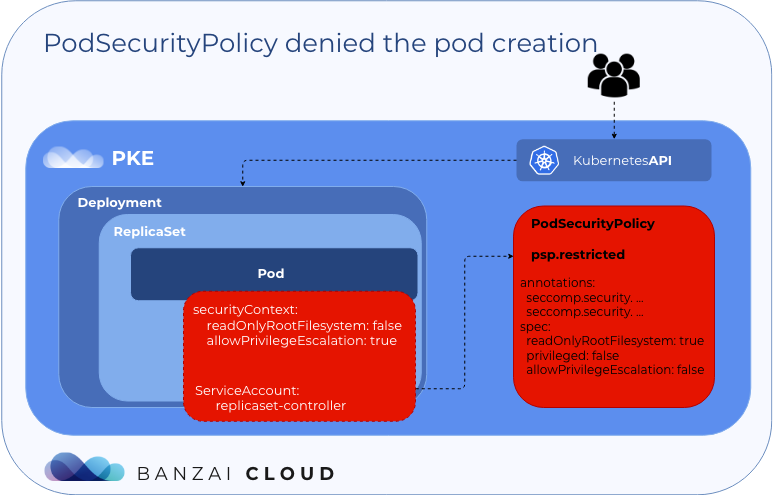

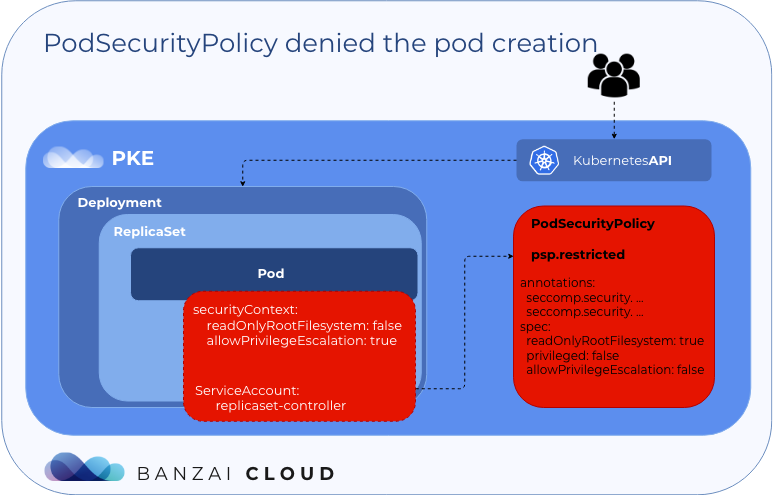
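As a minimal sketch of what "attaching" a PSP to a Service Account means, the following Role grants the `use` verb on a single PSP, and the RoleBinding gives that permission to one service account. All names (psp-example, example-app, example-ns) are hypothetical.

```yaml
# Hypothetical names throughout. The Role allows "use" of one PSP;
# the RoleBinding grants it to one service account in the namespace.
apiVersion: rbac.authorization.k8s.io/v1
kind: Role
metadata:
  name: psp-example-user
  namespace: example-ns
rules:
- apiGroups: ["policy"]
  resources: ["podsecuritypolicies"]
  resourceNames: ["psp-example"]   # the PSP being attached
  verbs: ["use"]
---
apiVersion: rbac.authorization.k8s.io/v1
kind: RoleBinding
metadata:
  name: psp-example-user
  namespace: example-ns
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: Role
  name: psp-example-user
subjects:
- kind: ServiceAccount
  name: example-app
  namespace: example-ns
```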

Denied by Pod Security Policy

If you try to create a pod with a PSP resource attached to its Service Account, but the pod does not satisfy the constraints set in the attached PSP resource, then the admission controller will deny the pod’s creation.
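For example, suppose the attached PSP has the runAsUser rule MustRunAsNonRoot, as the psp-restricted policy used later in this post does. A pod that explicitly requests UID 0 is then rejected at admission. The pod below is hypothetical, as are its names and placeholder image.

```yaml
# Hypothetical pod: rejected by a PSP whose runAsUser rule is
# MustRunAsNonRoot, because it explicitly asks to run as root.
apiVersion: v1
kind: Pod
metadata:
  name: runs-as-root
  namespace: example-ns
spec:
  containers:
  - name: app
    image: nginx          # placeholder image
    securityContext:
      runAsUser: 0        # UID 0 violates MustRunAsNonRoot
```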

Allowed by Pod Security Policy

If you try to create a pod with a PSP resource attached to its Service Account and the pod satisfies the constraints set in the attached PSP resource, then the admission controller will allow the pod to be created.

This is the gist of PSPs. For a more in-depth explanation, including working examples and a detailed list of the security aspects PSPs can constrain, see our earlier blog post: An illustrated deepdive into Pod Security Policies.

Deploy Istio with least privileges

Brief history

In Istio 1.5, the Istio control plane was redesigned as a monolith, and istiod was introduced.

In that release, SDS was also simplified, and the NodeAgent component started being phased out.

Istio 1.6 continued these architectural changes by removing the legacy control plane components that istiod had replaced.

With these changes, by the time Istio 1.6 was released, there were far fewer control plane components to secure.

In the meantime, further efforts were made to run the remaining components without root privileges (for example, they stopped using privileged port ranges and root-owned file paths). Let’s take a look at the remaining components in Istio 1.6.

Components with fewer privileges

These are the components that run without root privileges by default in Istio 1.6:

- istiod
- istio-coredns
- istio-telemetry
- istio-policy
- the istio-proxy sidecar container

It’s now also possible to run istio-gateway deployments without root privileges.
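With the Banzai Cloud Istio operator, this is set per gateway in the Istio custom resource. The fragment below shows only the ingress gateway’s runAsRoot field, which is the same field this post patches in the validation step. All other fields are omitted.

```yaml
# Fragment of the operator's Istio custom resource; other fields omitted.
apiVersion: istio.banzaicloud.io/v1beta1
kind: Istio
metadata:
  name: istio-sample
  namespace: istio-system
spec:
  gateways:
    ingress:
      runAsRoot: false   # run the ingress gateway as a non-root user
```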

Using the Istio CNI plugin instead of the istio-init container makes it possible to drop the NET_ADMIN capability.

These are the bare minimum privileges necessary for the remaining components. Let’s take a look at how we can enforce them with PSPs.

Restrict with PSPs

For all the control plane components except the CNI component, we can use the following restricted PSP to make sure that they run only as non-root users:

```yaml
apiVersion: policy/v1beta1
kind: PodSecurityPolicy
metadata:
  name: psp-restricted
spec:
  allowPrivilegeEscalation: false
  forbiddenSysctls:
  - '*'
  fsGroup:
    ranges:
    - max: 65535
      min: 1
    rule: MustRunAs
  requiredDropCapabilities:
  - ALL
  runAsUser:
    rule: MustRunAsNonRoot
  runAsGroup:
    rule: MustRunAs
    ranges:
    - min: 1
      max: 65535
  seLinux:
    rule: RunAsAny
  supplementalGroups:
    ranges:
    - max: 65535
      min: 1
    rule: MustRunAs
  volumes:
  - configMap
  - emptyDir
  - projected
  - secret
  - downwardAPI
  - persistentVolumeClaim
```
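A pod that satisfies psp-restricted runs with a non-zero UID and GID, drops all capabilities, forbids privilege escalation, and uses only allowed volume types. The sketch below shows one such pod. Its names and image are placeholders, and the image is assumed to work when run as a non-root user.

```yaml
# Hypothetical pod that satisfies psp-restricted (placeholder names/image).
apiVersion: v1
kind: Pod
metadata:
  name: restricted-example
spec:
  securityContext:
    runAsNonRoot: true
    runAsUser: 1000      # non-zero UID, allowed by MustRunAsNonRoot
    runAsGroup: 1000     # inside the allowed 1-65535 range
    fsGroup: 1000        # inside the allowed 1-65535 range
  containers:
  - name: app
    image: example.com/app:1.0   # placeholder; must run as non-root
    securityContext:
      allowPrivilegeEscalation: false
      capabilities:
        drop:
        - ALL            # matches requiredDropCapabilities
    volumeMounts:
    - name: scratch
      mountPath: /tmp
  volumes:
  - name: scratch
    emptyDir: {}         # emptyDir is in the allowed volume list
```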

The CNI component needs a different PSP because it requires access to the host network and to host path volumes, so we’ll use this one:

```yaml
apiVersion: policy/v1beta1
kind: PodSecurityPolicy
metadata:
  name: psp-host
spec:
  allowPrivilegeEscalation: true
  fsGroup:
    rule: RunAsAny
  hostNetwork: true
  runAsUser:
    rule: RunAsAny
  seLinux:
    rule: RunAsAny
  supplementalGroups:
    rule: RunAsAny
  volumes:
  - secret
  - configMap
  - emptyDir
  - hostPath
```
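To show why psp-host is needed, here is a hypothetical DaemonSet excerpt in the style of a CNI installer. It uses the host network and mounts the node’s CNI directories through hostPath volumes. The names, image, and paths are placeholders and do not come from the operator’s actual manifest. The CNI directories in particular differ between platforms.

```yaml
# Hypothetical CNI-installer-style DaemonSet (placeholder names, image, paths).
apiVersion: apps/v1
kind: DaemonSet
metadata:
  name: cni-node-example
  namespace: istio-system
spec:
  selector:
    matchLabels:
      app: cni-node-example
  template:
    metadata:
      labels:
        app: cni-node-example
    spec:
      hostNetwork: true                 # permitted by hostNetwork: true in psp-host
      containers:
      - name: install-cni
        image: example.com/install-cni:1.0   # placeholder image
        volumeMounts:
        - name: cni-bin-dir
          mountPath: /host/opt/cni/bin
        - name: cni-net-dir
          mountPath: /host/etc/cni/net.d
      volumes:                          # hostPath is permitted by psp-host
      - name: cni-bin-dir
        hostPath:
          path: /opt/cni/bin            # platform-specific location
      - name: cni-net-dir
        hostPath:
          path: /etc/cni/net.d          # platform-specific location
```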

With this setup (gateways running as non-root, the Istio CNI plugin, and PSPs), we can make sure that the Istio control plane components run with reduced privileges.

All the resources necessary for this setup, including Roles and RoleBindings, can be found in the Banzai Cloud Istio operator repository.
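If you write your own bindings, one option is to let every service account in a namespace use a policy by binding the namespace’s service-account group. The sketch below does this for psp-restricted in istio-system. The Role and RoleBinding names are hypothetical, and these are not the operator’s manifests. PSPs are cluster-scoped, but a namespaced RoleBinding like this one only authorizes pods created in istio-system.

```yaml
# Sketch (hypothetical names): every service account in istio-system
# may use psp-restricted.
apiVersion: rbac.authorization.k8s.io/v1
kind: Role
metadata:
  name: psp-restricted-user
  namespace: istio-system
rules:
- apiGroups: ["policy"]
  resources: ["podsecuritypolicies"]
  resourceNames: ["psp-restricted"]
  verbs: ["use"]
---
apiVersion: rbac.authorization.k8s.io/v1
kind: RoleBinding
metadata:
  name: psp-restricted-user
  namespace: istio-system
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: Role
  name: psp-restricted-user
subjects:
- apiGroup: rbac.authorization.k8s.io
  kind: Group
  name: system:serviceaccounts:istio-system   # all service accounts in the namespace
```

Binding the group avoids listing service accounts one by one. The trade-off is that any service account created in the namespace later also receives the policy.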

Try it out!

In this section, we’ll set up an Istio mesh with the minimum necessary privileges by using the Banzai Cloud Istio operator.

Create cluster

First, let’s create a GKE cluster with Network Policy enabled (for CNI) and pod security policy enabled (for PSP):

```bash
$ gcloud beta container clusters create psp-demo --enable-network-policy --enable-pod-security-policy --cluster-version=1.16.9-gke.2 --node-version=1.16.9-gke.2 --num-nodes=5
NAME      LOCATION        MASTER_VERSION  MASTER_IP     MACHINE_TYPE   NODE_VERSION  NUM_NODES  STATUS
psp-demo  europe-west1-b  1.16.9-gke.2    35.187.123.4  n1-standard-1  1.16.9-gke.2  5          RUNNING
```

Deploy the Banzai Cloud Istio operator with PSP

We will use Kustomize to deploy the operator and the necessary PSP resources to the cluster.

Create a kustomization.yaml file with the following content in any directory:

```yaml
bases:
- github.com/banzaicloud/istio-operator/config?ref=release-1.6
- github.com/banzaicloud/istio-operator/config/overlays/psp?ref=release-1.6
```

Apply the resources to your cluster based on that file:

```bash
$ kubectl apply -k .
namespace/istio-system created
customresourcedefinition.apiextensions.k8s.io/istios.istio.banzaicloud.io created
customresourcedefinition.apiextensions.k8s.io/meshgateways.istio.banzaicloud.io created
customresourcedefinition.apiextensions.k8s.io/remoteistios.istio.banzaicloud.io created
role.rbac.authorization.k8s.io/istio-operator-psp-host created
role.rbac.authorization.k8s.io/istio-operator-psp-restricted created
clusterrole.rbac.authorization.k8s.io/istio-operator-manager-role created
rolebinding.rbac.authorization.k8s.io/istio-operator-psp-host created
rolebinding.rbac.authorization.k8s.io/istio-operator-psp-restricted created
clusterrolebinding.rbac.authorization.k8s.io/istio-operator-manager-rolebinding created
service/istio-operator-controller-manager-service created
statefulset.apps/istio-operator-controller-manager created
podsecuritypolicy.policy/istio-operator-psp-host created
podsecuritypolicy.policy/istio-operator-psp-restricted created
```

Note: If you don’t want to use Kustomize, you can deploy the operator through whichever documented method you prefer, and then you can create your own PSP resources tailored to your specific needs.

Deploy Istio with CNI

Create the Istio CR with the Istio CNI enabled. The Istio operator will create the Istio mesh based on this CR.

```bash
$ kubectl -n istio-system apply -f https://raw.githubusercontent.com/banzaicloud/istio-operator/release-1.6/config/samples/istio_v1beta1_istio_cni_gke.yaml
istio.istio.banzaicloud.io/istio-sample created
```
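For reference, the CNI-related part of such a CR probably has roughly the following shape. The field names under sidecarInjector and the GKE binary directory are our assumptions and are not confirmed here. Check the sample file referenced in the command for the exact schema of your operator version.

```yaml
# Assumed shape only: field names under sidecarInjector and the
# directory values are assumptions; verify against the sample CR.
apiVersion: istio.banzaicloud.io/v1beta1
kind: Istio
metadata:
  name: istio-sample
spec:
  sidecarInjector:
    initCNIConfiguration:
      enabled: true                 # use the Istio CNI plugin instead of istio-init
      binDir: /home/kubernetes/bin  # assumed GKE location of CNI binaries
      confDir: /etc/cni/net.d       # assumed CNI config directory
```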

After a while, your Istio mesh should be up and running:

```bash
$ kubectl get po -n=istio-system
NAME                                    READY   STATUS    RESTARTS   AGE
istio-cni-node-8kxsm                    2/2     Running   0          3m3s
istio-cni-node-jx7k6                    2/2     Running   0          3m2s
istio-cni-node-mqgkn                    2/2     Running   0          3m3s
istio-cni-node-pdz5q                    2/2     Running   0          3m3s
istio-cni-node-zp98z                    2/2     Running   0          3m2s
istio-ingressgateway-5c5b6c7fdd-bqwn7   1/1     Running   0          2m32s
istio-operator-controller-manager-0     1/1     Running   0          11m
istiod-76fb86d68f-tf5rv                 1/1     Running   0          3m4s
```

Validating restricted PSPs

Let’s make sure that our PSP works by modifying a flag in the Istio CR and trying to run the istio-ingressgateway pod as root:

```bash
kubectl patch istio -n istio-system istio-sample --type=json -p='[{"op": "replace", "path": "/spec/gateways/ingress/runAsRoot", "value":true}]'
```

After a few seconds, you should see that the new istio-ingressgateway pod is unable to start:

```bash
$ kubectl get po -n=istio-system -l app=istio-ingressgateway
NAME                                    READY   STATUS                       RESTARTS   AGE
istio-ingressgateway-5c5b6c7fdd-bqwn7   1/1     Running                      0          3m32s
istio-ingressgateway-5d979f4dd4-jqlgq   0/1     CreateContainerConfigError   0          106s
```

Let’s see why:

```bash
$ kubectl describe po -n=istio-system -l app=istio-ingressgateway
  Warning  Failed  6s (x10 over 118s)  kubelet, gke-psp-demo-default-pool-7528dec2-kww1  Error: container has runAsNonRoot and image will run as root
```

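The pod itself is admitted. However, the restricted PSP resource we created with Kustomize sets runAsNonRoot: true on its container, because that is what the MustRunAsNonRoot rule does when no user is specified. The image would run as root, so the kubelet refuses to start the container.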
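You can confirm which policy admitted a pod by checking the kubernetes.io/psp annotation that the PSP admission controller sets on it. Below is a hypothetical excerpt of the gateway pod that was admitted but cannot start. The container name and the exact annotation value are assumptions.

```yaml
# Hypothetical excerpt of the admitted istio-ingressgateway pod;
# container name and annotation value are assumptions, other fields omitted.
metadata:
  annotations:
    kubernetes.io/psp: istio-operator-psp-restricted   # policy that admitted the pod
spec:
  containers:
  - name: istio-proxy
    securityContext:
      runAsNonRoot: true   # defaulted by the MustRunAsNonRoot rule
      # No runAsUser is set, so the image's default user (root) would
      # apply; the kubelet therefore refuses to start the container.
```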

Let’s change the runAsRoot flag back to false so that we can proceed with our demonstration:

```bash
kubectl patch istio -n istio-system istio-sample --type=json -p='[{"op": "replace", "path": "/spec/gateways/ingress/runAsRoot", "value":false}]'
```

Deploy BookInfo

For the BookInfo pods, we need to bind a PSP resource so that the PSP admission controller will allow us to create them.

Copy the following resources to a file named bookinfo-psp.yaml:

```yaml
apiVersion: policy/v1beta1
kind: PodSecurityPolicy
metadata:
  name: istio
spec:
  fsGroup:
    rule: RunAsAny
  runAsUser:
    rule: RunAsAny
  seLinux:
    rule: RunAsAny
  supplementalGroups:
    rule: RunAsAny
  volumes:
  - '*'
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRole
metadata:
  name: istio
rules:
- apiGroups:
  - policy
  resourceNames:
  - istio
  resources:
  - podsecuritypolicies
  verbs:
  - use
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  name: istio-user
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: istio
subjects:
- kind: ServiceAccount
  name: bookinfo-productpage
  namespace: default
- kind: ServiceAccount
  name: bookinfo-details
  namespace: default
- kind: ServiceAccount
  name: bookinfo-ratings
  namespace: default
- kind: ServiceAccount
  name: bookinfo-reviews
  namespace: default
```

Apply the resources based on that file:

```bash
$ kubectl apply -f bookinfo-psp.yaml
podsecuritypolicy.policy/istio created
clusterrole.rbac.authorization.k8s.io/istio created
clusterrolebinding.rbac.authorization.k8s.io/istio-user created
```

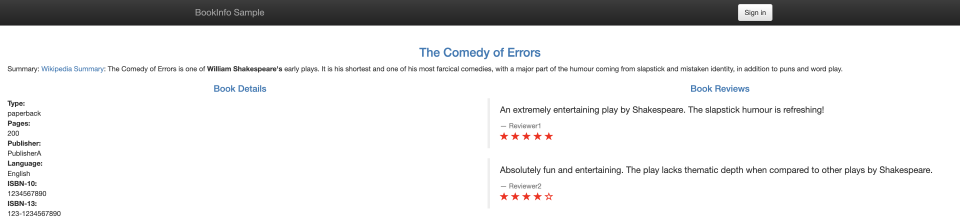

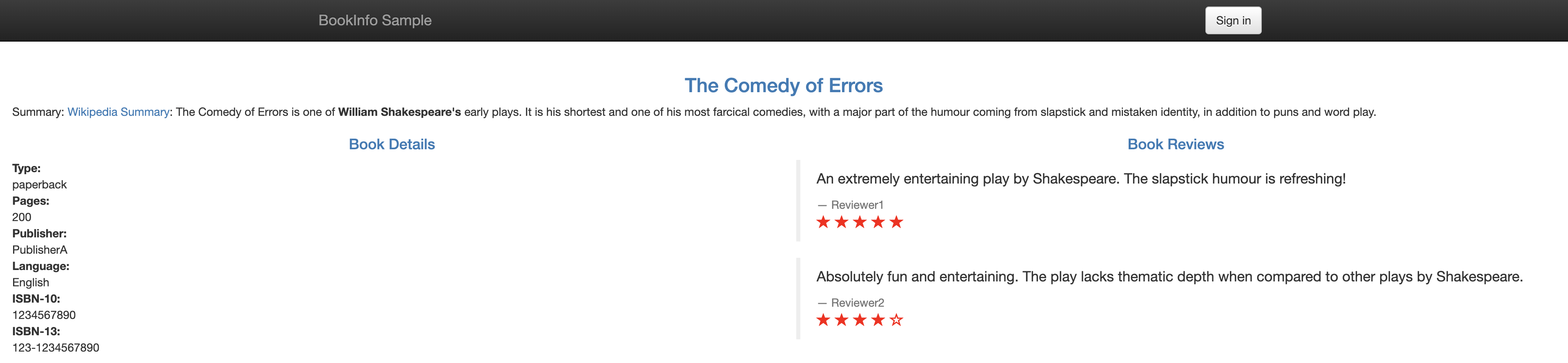

Run the following commands to deploy the BookInfo application, expose it through an ingress gateway, and open its UI in a browser:

```bash
kubectl -n default apply -f https://raw.githubusercontent.com/istio/istio/release-1.6/samples/bookinfo/platform/kube/bookinfo.yaml
kubectl -n default apply -f https://raw.githubusercontent.com/istio/istio/release-1.6/samples/bookinfo/networking/bookinfo-gateway.yaml
INGRESS_HOST=$(kubectl -n istio-system get service istio-ingressgateway -o jsonpath='{.status.loadBalancer.ingress[0].ip}')
open http://$INGRESS_HOST/productpage   # macOS; on Linux, use xdg-open instead
```

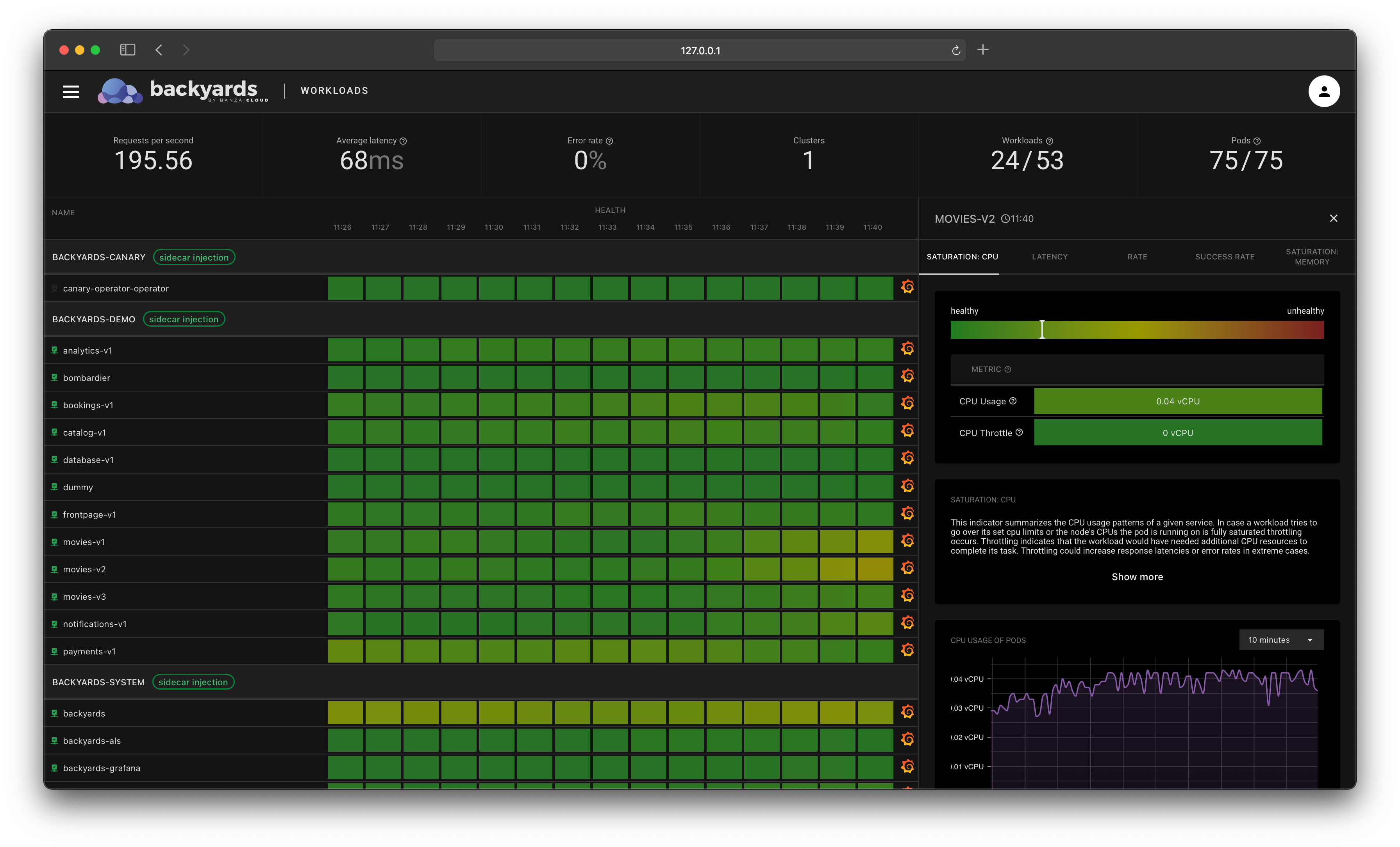

After the pods start successfully, the application should be up and running:

At this point, your mesh is functioning with the minimum necessary privileges, enforced by your freshly created PSPs.

Cleanup

To delete the BookInfo application, the Istio control plane and the Istio operator from your cluster, run the following commands:

```bash
kubectl -n default delete -f https://raw.githubusercontent.com/istio/istio/release-1.6/samples/bookinfo/networking/bookinfo-gateway.yaml
kubectl -n default delete -f https://raw.githubusercontent.com/istio/istio/release-1.6/samples/bookinfo/platform/kube/bookinfo.yaml
kubectl -n default delete -f bookinfo-psp.yaml
kubectl -n istio-system delete -f https://raw.githubusercontent.com/banzaicloud/istio-operator/release-1.6/config/samples/istio_v1beta1_istio_cni_gke.yaml
kubectl delete -k .
```

Takeaway

As you can see, the open source Banzai Cloud Istio operator makes it possible to set up an Istio mesh that runs its control plane components with the bare minimum of necessary privileges, and to enforce those constraints with restricted PSPs.