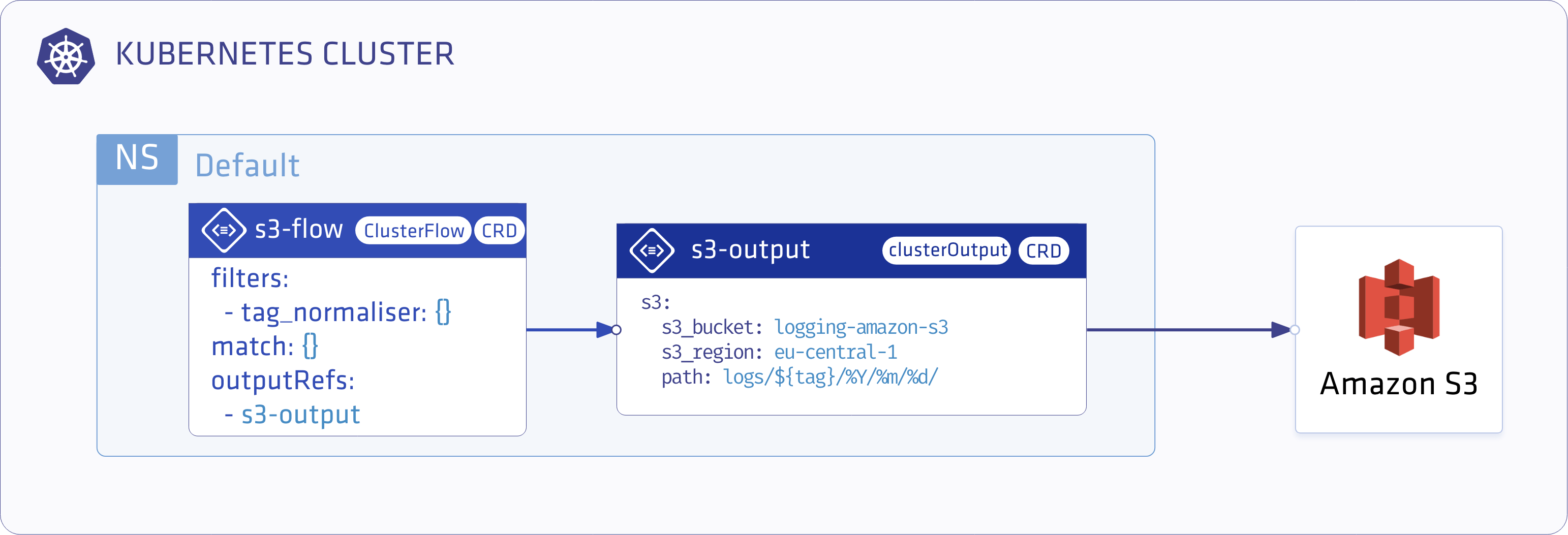

This guide describes how to collect all the container logs in Kubernetes using the Logging operator, and how to send them to Amazon S3.

The following figure gives you an overview of how the system works. The Logging operator collects the logs from the application, selects which logs to forward to the output, and sends the selected log messages to the output. For more details about the Logging operator, see the Logging operator overview.

Deploy the Logging operator

Install the Logging operator.

Deploy the Logging operator with Helm

To install the Logging operator using Helm, complete these steps.

Note: For the Helm-based installation you need Helm v3.2.1 or later.
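The version requirement in the note can be checked before you start. The following is a minimal sketch, not part of the official instructions: the `version_at_least` function is a hypothetical helper written for this guide, and it assumes that `helm version --short` prints a version string such as `v3.12.0+gc9f554d`.

```shell
# Hypothetical helper: succeeds if version $1 is at least version $2.
# A leading "v" and any "-prerelease" or "+build" suffix are ignored.
version_at_least() {
  have=${1#v}
  want=${2#v}
  have=${have%%[-+]*}
  want=${want%%[-+]*}
  # Pad both versions so that cut always finds three dot-separated fields.
  have="$have.0.0"
  want="$want.0.0"
  for i in 1 2 3; do
    a=$(printf '%s' "$have" | cut -d. -f"$i")
    b=$(printf '%s' "$want" | cut -d. -f"$i")
    if [ "$a" -gt "$b" ]; then return 0; fi
    if [ "$a" -lt "$b" ]; then return 1; fi
  done
  return 0
}

# Usage (requires helm on the PATH):
#   version_at_least "$(helm version --short)" "v3.2.1" || echo "Helm is older than v3.2.1" >&2
```

The comparison is numeric per field, so `v3.12.0` is correctly treated as newer than `v3.2.1`.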

- Add the chart repository of the Logging operator using the following commands:

      helm repo add banzaicloud-stable https://kubernetes-charts.banzaicloud.com
      helm repo update

- Install the demo application and its logging definition.

      helm upgrade --install --wait --create-namespace --namespace logging logging-demo banzaicloud-stable/logging-demo \
        --set "loki.enabled=True"

Configure the Logging operator

- Create the `logging` namespace.

      kubectl create ns logging

- Create the AWS secret.

  If you have your `$AWS_ACCESS_KEY_ID` and `$AWS_SECRET_ACCESS_KEY` set, you can use the following snippet.

      kubectl -n logging create secret generic logging-s3 \
        --from-literal "awsAccessKeyId=$AWS_ACCESS_KEY_ID" \
        --from-literal "awsSecretAccessKey=$AWS_SECRET_ACCESS_KEY"

  Or set up the secret manually.

      kubectl -n logging apply -f - <<"EOF"
      apiVersion: v1
      kind: Secret
      metadata:
        name: logging-s3
      type: Opaque
      data:
        awsAccessKeyId: <base64encoded>
        awsSecretAccessKey: <base64encoded>
      EOF

- Create the `logging` resource.

      kubectl -n logging apply -f - <<"EOF"
      apiVersion: logging.banzaicloud.io/v1beta1
      kind: Logging
      metadata:
        name: default-logging-simple
      spec:
        fluentd: {}
        fluentbit: {}
        controlNamespace: logging
      EOF

  Note: You can use the `ClusterOutput` and `ClusterFlow` resources only in the `controlNamespace`.

- Create an S3 `output` definition.

      kubectl -n logging apply -f - <<"EOF"
      apiVersion: logging.banzaicloud.io/v1beta1
      kind: Output
      metadata:
        name: s3-output
        namespace: logging
      spec:
        s3:
          aws_key_id:
            valueFrom:
              secretKeyRef:
                name: logging-s3
                key: awsAccessKeyId
          aws_sec_key:
            valueFrom:
              secretKeyRef:
                name: logging-s3
                key: awsSecretAccessKey
          s3_bucket: logging-amazon-s3
          s3_region: eu-central-1
          path: logs/${tag}/%Y/%m/%d/
          buffer:
            timekey: 10m
            timekey_wait: 30s
            timekey_use_utc: true
      EOF

  Note: In a production environment, use a longer `timekey` interval to avoid generating too many objects.

- Create a `flow` resource.

      kubectl -n logging apply -f - <<"EOF"
      apiVersion: logging.banzaicloud.io/v1beta1
      kind: Flow
      metadata:
        name: s3-flow
      spec:
        filters:
          - tag_normaliser: {}
        match:
          - select:
              labels:
                app.kubernetes.io/name: log-generator
        localOutputRefs:
          - s3-output
      EOF
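If you set up the secret manually, the two `<base64encoded>` placeholders must hold the base64-encoded forms of your credentials. One way to produce them is sketched below; `encode_secret_value` is a hypothetical helper name, not a command shipped with the Logging operator.

```shell
# Hypothetical helper: base64-encode a value for the data field of a Secret.
# printf '%s' avoids the trailing newline that echo would add to the input,
# and tr removes the line wrapping that some base64 implementations insert.
encode_secret_value() {
  printf '%s' "$1" | base64 | tr -d '\n'
}

# Usage (prints the values to paste in place of <base64encoded>):
#   encode_secret_value "$AWS_ACCESS_KEY_ID"
#   encode_secret_value "$AWS_SECRET_ACCESS_KEY"
```

Encoding a stray newline together with the key is a common reason for credentials that look correct but are rejected, which is why the sketch uses `printf` instead of `echo`.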

Validate the deployment

Check the output. The logs will be available in the bucket on a path like:

/logs/default.default-logging-simple-fluentbit-lsdp5.fluent-bit/2019/09/11/201909111432_0.gz
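To look for such objects from the command line, you can build the key prefix from the `path` template of the output (`logs/${tag}/%Y/%m/%d/`) and list it. The sketch below assumes that the AWS CLI is installed and configured with access to the bucket; the function names `s3_log_prefix` and `list_s3_logs` are made up for illustration.

```shell
# Hypothetical helper: build the S3 key prefix for a tag and a date,
# mirroring the path template logs/${tag}/%Y/%m/%d/ of the S3 output.
# Arguments: tag, year, month, day.
s3_log_prefix() {
  printf 'logs/%s/%s/%s/%s/\n' "$1" "$2" "$3" "$4"
}

# Hypothetical helper: list the objects written today for one tag.
# The date is taken in UTC because the output sets timekey_use_utc: true.
# Arguments: bucket, tag.
list_s3_logs() {
  aws s3 ls "s3://$1/$(s3_log_prefix "$2" "$(date -u +%Y)" "$(date -u +%m)" "$(date -u +%d)")"
}

# Usage:
#   list_s3_logs logging-amazon-s3 default.default-logging-simple-fluentbit-lsdp5.fluent-bit
```

With a `timekey` of 10 minutes and a `timekey_wait` of 30 seconds, expect a delay of several minutes before the first object shows up.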

If you don’t get the expected result, you can find help in the troubleshooting section.